LoadTester

LoadTester runs lightning-fast HTTP and API load tests from your browser or CI/CD pipeline with zero infrastructure to manage.

About LoadTester

LoadTester is a cloud-native HTTP and API load testing tool built by Cloud Native d.o.o. for engineering teams that need repeatable, fast performance checks without managing infrastructure. It runs distributed load tests directly from your browser or CI/CD pipeline and delivers live analytics, p95 and error-rate thresholds, scheduled baselines, and run-to-run comparisons within seconds.

Whether you are a solo developer validating an endpoint or a platform team running nightly release gates, the workflow is the same. You create a test, choose virtual users or requests per second, and watch throughput, latency, and error rates update in real time. After the run, you review the results, export PDF, CSV, or JSON reports, compare runs against baselines, and catch performance regressions before your users notice.

LoadTester has a cold start under 3 seconds, supports up to 10,000 virtual users and 10,000 requests per second, and has a 99.8% typical success rate. It also includes scheduled tests, API access, and workflow integrations for teams that want simple, repeatable performance checks without heavy setup.

Features of LoadTester

Instant Execution, Distributed by Default

Start distributed load tests in seconds with zero infrastructure setup. LoadTester automatically dispatches workers and scales them for your test, so you focus on results while it handles worker scaling, infrastructure coordination, and execution flow. Cold start is under 3 seconds, and tests begin streaming data immediately.

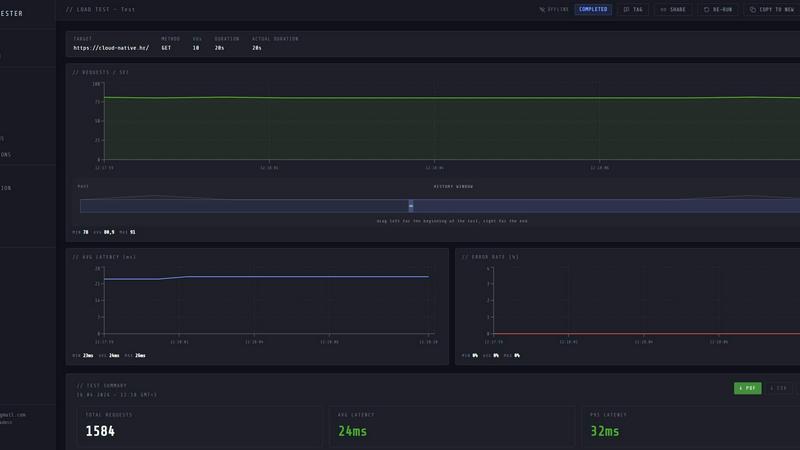

Live Analytics and Telemetry

Watch requests, latency, failures, throughput, and bottlenecks in real time while your test is running. Live charts update continuously with RPS, p50, p95, p99 latency, active virtual users, and error counts. You see performance data as it happens, not five minutes after the run ends, enabling instant feedback and rapid iteration.
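To make the latency figures concrete, here is a minimal sketch of how p50, p95, and p99 can be derived from raw latency samples using the nearest-rank method. It is an illustration only: the source does not say which percentile method LoadTester uses, and the function name and sample values are made up for the example.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical request latencies in milliseconds.
latencies_ms = [120, 95, 110, 480, 101, 130, 99, 105, 250, 115]

summary = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
print(summary)  # {'p50': 110, 'p95': 480, 'p99': 480}
```

With only ten samples, p95 and p99 both land on the slowest request, which is why tail percentiles are more informative on longer runs.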

Smart Auto-Stop with Thresholds

Set failure or latency thresholds and automatically stop a test when they are breached. Configure p95 latency limits, error rate caps, and regression alerts that notify you via Slack and email when a run exceeds its baseline by 15% or more. Webhooks on completion let you feed results directly into your release pipeline.
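The auto-stop idea can be sketched as a check that runs against each live snapshot and halts at the first breach. This is a hedged illustration, not LoadTester's implementation: the `Thresholds` class, the function names, the snapshot shape, and the limit values are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Thresholds:
    max_p95_ms: float      # stop when p95 latency rises above this
    max_error_rate: float  # stop when errors / requests rises above this (0.02 = 2%)


def breached(p95_ms, requests, errors, limits):
    """Return the list of thresholds that the current snapshot violates."""
    error_rate = errors / requests if requests else 0.0
    reasons = []
    if p95_ms > limits.max_p95_ms:
        reasons.append(f"p95 {p95_ms:.0f} ms > {limits.max_p95_ms:.0f} ms")
    if error_rate > limits.max_error_rate:
        reasons.append(f"error rate {error_rate:.1%} > {limits.max_error_rate:.1%}")
    return reasons


def first_stop(snapshots, limits):
    """Walk snapshots in order; return (index, reasons) for the first breach, or None."""
    for i, (p95_ms, requests, errors) in enumerate(snapshots):
        reasons = breached(p95_ms, requests, errors, limits)
        if reasons:
            return i, reasons
    return None


limits = Thresholds(max_p95_ms=400, max_error_rate=0.02)
# Hypothetical snapshots: (p95 in ms, requests so far, errors so far)
snapshots = [(180, 500, 2), (220, 1000, 9), (390, 1500, 45), (450, 2000, 90)]
print(first_stop(snapshots, limits))  # (2, ['error rate 3.0% > 2.0%'])
```

Here the run would stop at the third snapshot because the error rate crosses 2% before p95 latency crosses its limit.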

CI/CD Ready with Webhooks and Alerts

Run tests on every deploy with CI/CD integration. LoadTester fits into your existing workflow through API access, scheduled tests, and webhooks. Automatically trigger load tests after deployments, compare results against historical baselines, and alert your team via Slack or email if performance regressions appear.

Use Cases of LoadTester

Spike Testing for E-Commerce Checkout

Simulate a sudden surge of traffic to your checkout endpoint during a flash sale. Set the test to 500 requests per second for 300 seconds, watch live RPS and p95 latency, and automatically stop the test if error rates exceed 2%. Review the clean summary of total requests, average latency, and data throughput to confirm your infrastructure can handle peak load.
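As a quick sanity check on the numbers in this scenario, the arithmetic below works out how many requests the run sends and how many failures fit under the 2% cap. It assumes the error rate is measured over the whole run, which the source does not specify; the variable names are illustrative.

```python
rate_rps = 500       # requests per second, from the scenario above
duration_s = 300     # test duration in seconds
max_error_pct = 2    # auto-stop threshold, in percent

total_requests = rate_rps * duration_s
error_budget = total_requests * max_error_pct // 100

print(total_requests)  # 150000
print(error_budget)    # 3000
```

A full run therefore sends 150,000 requests, and more than 3,000 failures would push the overall error rate past the threshold.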

Baseline Performance Monitoring for APIs

Run a nightly baseline test against your core API endpoints, such as authentication or search. Schedule the test to execute daily at 02:00 UTC, compare each run against the previous baseline, and get immediate Slack alerts if p95 latency increases by 15% or more. Catch regressions before your users notice any slowdown.
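The baseline comparison reduces to a percentage change in p95 latency. The sketch below uses the "15% or more" rule stated in the FAQ; the function names and latency values are hypothetical, and LoadTester's own comparison logic may differ.

```python
def p95_change_pct(baseline_ms, current_ms):
    """Percentage change of the current p95 relative to the baseline p95."""
    if baseline_ms <= 0:
        raise ValueError("baseline must be positive")
    return (current_ms - baseline_ms) * 100 / baseline_ms


def is_regression(baseline_ms, current_ms, threshold_pct=15.0):
    """True when p95 latency grew by threshold_pct or more."""
    return p95_change_pct(baseline_ms, current_ms) >= threshold_pct


print(p95_change_pct(200, 230))  # 15.0
print(is_regression(200, 230))   # True
print(is_regression(200, 220))   # False
```

A nightly job could call `is_regression` with the previous baseline and the new run, then send an alert when it returns `True`.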

Release Gate Validation in CI/CD

Integrate LoadTester into your CI/CD pipeline to automatically run a load test before every production deployment. Use webhooks to trigger the test, set thresholds for p95 latency under 400ms and error rate under 2%, and block the release if thresholds are breached. Ensure every deploy maintains performance standards.
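A release gate of this kind can be sketched as a small function that reads a run summary and returns a process exit code for the pipeline. The JSON field names (`p95_ms`, `error_rate_pct`) are assumptions for illustration and are not taken from LoadTester's export format.

```python
import json


def gate_exit_code(result_json, max_p95_ms=400.0, max_error_rate_pct=2.0):
    """Return 0 when both metrics are strictly under their limits, else 1."""
    result = json.loads(result_json)
    ok = (
        result["p95_ms"] < max_p95_ms
        and result["error_rate_pct"] < max_error_rate_pct
    )
    return 0 if ok else 1


# Hypothetical run summaries.
passing = '{"p95_ms": 312.5, "error_rate_pct": 0.4}'
failing = '{"p95_ms": 455.0, "error_rate_pct": 0.4}'

print(gate_exit_code(passing))  # 0
print(gate_exit_code(failing))  # 1
```

In a pipeline step, passing the return value to `sys.exit` makes a breached threshold fail the job and block the release.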

Capacity Planning for New Features

When launching a new feature endpoint, run a ramp-up test to determine the maximum throughput your service can handle. Gradually increase virtual users from 0 to 10,000 while monitoring latency distribution and error rates. Export the results as JSON or CSV to share with your infrastructure team for capacity planning discussions.
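One way to reason about a ramp-up test is as a series of evenly spaced virtual-user levels, with capacity read off as the highest level that stayed within limits. The sketch below assumes a linear ramp in discrete steps and uses made-up measurements; the step count, limits, and function names are illustrative, not LoadTester settings.

```python
def ramp_schedule(start_vus, end_vus, steps):
    """Evenly spaced virtual-user levels from start_vus to end_vus, inclusive."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [start_vus + (end_vus - start_vus) * i // steps for i in range(steps + 1)]


def max_healthy_level(schedule, p95_ms, error_pct, max_p95_ms, max_error_pct):
    """Highest VU level reached before the first threshold breach, or None."""
    best = None
    for vus, p95, err in zip(schedule, p95_ms, error_pct):
        if p95 > max_p95_ms or err > max_error_pct:
            break
        best = vus
    return best


schedule = ramp_schedule(0, 10_000, 5)
print(schedule)  # [0, 2000, 4000, 6000, 8000, 10000]

# Hypothetical measurements per level.
p95_ms = [0, 120, 150, 210, 380, 620]
error_pct = [0.0, 0.1, 0.1, 0.3, 0.9, 4.2]
print(max_healthy_level(schedule, p95_ms, error_pct, 400, 2.0))  # 8000
```

In this made-up run the service holds up to 8,000 virtual users and degrades at 10,000, which is the kind of figure a capacity planning discussion would start from.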

Frequently Asked Questions

How quickly can I start my first load test?

A test sends its first request less than 3 seconds after you click run, and there is no infrastructure to provision. Create a test, set your target URL and rate, and launch it.

What is the maximum scale LoadTester supports?

LoadTester supports up to 10,000 virtual users (VUs) and 10,000 requests per second (RPS) per test. Workers auto-scale to handle your test load, and the system is built for scale, performance, and reliability. For larger requirements, contact the team for custom plans.

Can I compare test runs against previous results?

Yes, LoadTester includes run-to-run comparisons and regression detection. You can set baselines for each test and automatically compare new runs against those baselines. Alerts trigger when p95 latency increases by 15% or more, helping you catch performance regressions immediately.

What integrations and exports are available?

LoadTester supports CI/CD integration via API access and webhooks, scheduled tests, and Slack and email alerts. You can export results as PDF, CSV, or JSON for sharing with your team. Webhooks on completion let you post result links to release bots or other workflow tools.

Similar to LoadTester

ProcessSpy is a powerful macOS tool that provides in-depth process monitoring with advanced filtering, real-time insights, and seamless performance.

Give your AI agent its own iMessage number for instant, seamless communication from any platform.

Datamata Studios empowers developers with free tools and market insights to automate workflows and enhance skill development effortlessly.

Requestly is a fast, git-based API client that simplifies testing and collaboration with no login required, making your workflow seamless.

OpenMark AI quickly benchmarks 100+ LLMs for your tasks, revealing the best model based on cost, speed, quality, and stability.

OGimagen quickly generates stunning Open Graph images and meta tags for social media, enhancing your content in seconds.

Scale QA with AI agents while keeping full control and governance.

Blueberry is an all-in-one Mac app that streamlines coding, terminal tasks, and browsing for efficient web app development.