Agenta vs Fallom

Side-by-side comparison to help you choose the right product.

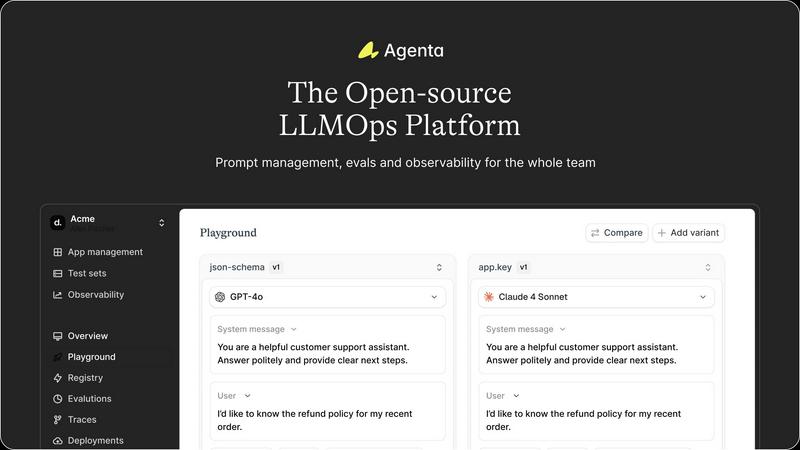

Agenta is the open-source LLMOps platform that streamlines AI app development with centralized collaboration.

Last updated: March 1, 2026

Fallom delivers instant, real-time observability for LLM calls and agent interactions, ensuring efficient tracking.

Last updated: February 28, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta centralizes all prompts, evaluations, and traces on one platform. This eliminates the chaos of scattered workflows, allowing teams to focus on prompt optimization and collaboration without losing valuable insights.

Automated Evaluation Process

Agenta introduces an automated evaluation process that replaces guesswork with evidence-based decision-making. Teams can systematically run experiments, track results, and validate every change, ensuring reliable performance improvements.
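
To make the idea of evidence-based prompt evaluation concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK; `fake_model`, `TEST_CASES`, `VARIANTS`, and `evaluate` are hypothetical names invented for illustration, and the "model" is a canned stand-in rather than a real LLM call.

```python
from statistics import mean

# Hypothetical test set: (question, text the answer must contain).
TEST_CASES = [
    ("2 + 2", "4"),
    ("capital of France", "Paris"),
]

def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; returns canned answers."""
    canned = {"2 + 2": "The answer is 4.", "capital of France": "Paris."}
    for key, answer in canned.items():
        if key in prompt:
            return answer
    return "I don't know."

# Two hypothetical prompt variants under comparison.
VARIANTS = {
    "v1": lambda q: f"Answer briefly: {q}",
    "v2": lambda q: f"Q: {q.upper()}\nA:",  # upper-casing breaks one case
}

def evaluate(build_prompt) -> float:
    """Return the fraction of test cases whose output contains the expected text."""
    scores = []
    for question, expected in TEST_CASES:
        output = fake_model(build_prompt(question))
        scores.append(1.0 if expected in output else 0.0)
    return mean(scores)

# Score every variant against the same test set.
results = {name: evaluate(build) for name, build in VARIANTS.items()}
print(results)
```

Running both variants against the same fixed test set is what turns a prompt change into a measurable result: here the second variant scores lower because its rewritten input no longer matches one of the canned cases.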

Unified Playground

The unified playground allows teams to compare prompts and models side-by-side. This feature enables quick iterations and testing, ensuring that errors can be identified and corrected efficiently, leading to enhanced product quality.

Comprehensive Observability

With comprehensive observability features, Agenta allows teams to trace every request and pinpoint failure points easily. This functionality enhances debugging capabilities, enabling teams to gather user feedback and monitor system performance in real time.

Fallom

AI Native Observability

Fallom offers real-time observability for AI agents, allowing teams to track every function call, analyze timing, and debug issues with confidence. This feature ensures that you never miss a beat in your AI operations.

Cost Attribution

With Fallom's cost attribution feature, you can meticulously track expenditures per model, user, and team. This functionality provides full transparency for budgeting and chargeback, helping organizations manage their AI budgets effectively.
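
As a rough illustration of what per-model, per-user, and per-team attribution involves, the sketch below sums per-call costs by a chosen dimension. It is not Fallom's API: the record fields, the `PRICE_PER_1K` table, and the `attribute` helper are assumptions made up for this example, and the prices are placeholders rather than real provider rates.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; not real provider pricing.
PRICE_PER_1K = {"model-a": 0.50, "model-b": 2.00}

# Hypothetical call records, as an observability tool might log them.
CALLS = [
    {"model": "model-a", "user": "alice", "team": "search", "tokens": 2000},
    {"model": "model-b", "user": "bob", "team": "support", "tokens": 500},
    {"model": "model-a", "user": "alice", "team": "search", "tokens": 1000},
]

def call_cost(call: dict) -> float:
    """Cost of one call: price per 1K tokens times tokens used."""
    return PRICE_PER_1K[call["model"]] * call["tokens"] / 1000

def attribute(calls: list, key: str) -> dict:
    """Sum call costs grouped by the given record field."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[key]] += call_cost(call)
    return dict(totals)

by_model = attribute(CALLS, "model")
by_team = attribute(CALLS, "team")
print(by_model, by_team)
```

The same records can be grouped by any field, which is why tagging each call with model, user, and team at logging time matters for later chargeback.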

Compliance Ready

Fallom is built with compliance in mind, featuring complete audit trails that support regulatory requirements such as the EU AI Act, SOC 2, and GDPR. This helps keep your AI operations auditable as well as efficient.

Tool Call Visibility

Enjoy complete visibility into every tool call made by your agents. Fallom tracks arguments, results, and the entire execution path, enabling teams to debug complex workflows and enhance overall performance.
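
The kind of record described above (arguments, results, and execution order for each tool call) can be sketched with a small decorator. This is a generic, standard-library illustration, not Fallom's SDK; `traced`, `TRACE`, `lookup_order`, and `send_email` are hypothetical names chosen for the example.

```python
import functools
import time

TRACE = []  # in-memory log of tool calls, in completion order

def traced(fn):
    """Record arguments, result or error, and duration of each call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        record = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        try:
            record["result"] = fn(*args, **kwargs)
            return record["result"]
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on success and on failure, so every call is logged.
            record["duration_s"] = time.perf_counter() - start
            TRACE.append(record)
    return wrapper

@traced
def lookup_order(order_id: str) -> dict:
    """Hypothetical tool: fetch an order's status."""
    return {"order_id": order_id, "status": "shipped"}

@traced
def send_email(to: str, body: str) -> bool:
    """Hypothetical tool: pretend to send an email."""
    return True

order = lookup_order("A-17")
send_email("user@example.com", body=f"Order {order['order_id']} is {order['status']}")

for entry in TRACE:
    print(entry["tool"], entry["args"], entry["kwargs"])
```

Reading `TRACE` back in order reconstructs the execution path of the workflow, including the inputs that each step actually received.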

Use Cases

Agenta

Rapid Prototyping of AI Applications

Agenta facilitates rapid prototyping by allowing teams to experiment with various prompts and models simultaneously. This accelerates the development cycle, enabling faster deployment of AI features with higher confidence in their effectiveness.

Cross-Functional Collaboration

Teams can collaborate effectively through Agenta's integrated platform. Product managers, developers, and domain experts can work together seamlessly, reducing silos and enhancing communication throughout the LLM development process.

Error Resolution and Debugging

When issues arise in production, Agenta makes it easy to trace and annotate errors. Teams can turn any trace into a test with a single click, streamlining the debugging process and closing the feedback loop quickly.

Performance Monitoring and Improvement

Agenta supports continuous performance monitoring through live, online evaluations. This allows teams to detect regressions and systematically improve their LLM applications, ensuring that they meet user expectations consistently.

Fallom

Debugging AI Workflows

Fallom is an invaluable tool for debugging complex multi-step AI workflows. It provides detailed insights into each step of the process, allowing teams to identify and resolve issues quickly and efficiently.

Cost Management

Use Fallom to manage and attribute costs associated with AI models. By tracking spend across different models and teams, organizations can optimize their AI budgets and make informed financial decisions.

Regulatory Compliance

For organizations operating in regulated industries, Fallom ensures that all interactions with LLMs are logged and auditable. This provides peace of mind when it comes to compliance with laws and regulations.

Performance Monitoring

Fallom's real-time dashboard allows teams to monitor LLM usage live, identify anomalies, and take preemptive action before they escalate into significant issues. This proactive approach enhances overall system reliability.

Overview

About Agenta

Agenta is the open-source LLMOps platform specifically designed to transform the way AI teams develop and deploy large language models (LLMs). By addressing the chaos and unpredictability that often accompany LLM development, Agenta provides a structured environment that promotes collaboration among developers, product managers, and domain experts. Its primary focus is on streamlining the LLM lifecycle, enabling teams to swiftly iterate on prompts, validate changes, and debug issues effectively. Agenta centralizes prompt management, automated evaluations, and production observability into a unified workflow, significantly reducing time-to-production while enhancing the reliability and performance of AI applications. Model-agnostic and framework-friendly, Agenta integrates seamlessly into existing tech stacks, empowering teams to build robust AI products without the risk of vendor lock-in. The platform serves as the essential infrastructure for teams eager to accelerate their LLM development journey, ensuring that experimentation leads to reliable, shipped applications.

About Fallom

Fallom is the cutting-edge observability platform designed specifically for teams working with production-level large language models (LLMs) and AI agent applications. With its lightning-fast performance and AI-native architecture, Fallom simplifies the complexities of modern AI stacks, offering unparalleled visibility into every single LLM call. In less than five minutes, users can integrate the OpenTelemetry-native SDK into their applications to gain real-time insights into prompts, outputs, tool calls, tokens, latency, and per-call costs. This powerful tool is tailored for engineering and product teams, enabling them to monitor live usage, debug intricate multi-step workflows, and accurately allocate AI expenditures across different models, users, and teams. Fallom serves as an enterprise-ready command center for AI operations, providing essential session-level context, timing waterfalls, and comprehensive audit trails, all of which are necessary for compliance and effective scaling.

Frequently Asked Questions

Agenta FAQ

What types of teams can benefit from Agenta?

Agenta is designed for cross-functional teams, including developers, product managers, and domain experts, who are involved in LLM development and deployment.

Is Agenta compatible with existing tech stacks?

Yes, Agenta is model-agnostic and framework-friendly, allowing seamless integration with your current tools and systems without any vendor lock-in.

How does Agenta enhance collaboration among team members?

Agenta provides a unified platform where prompts, evaluations, and traces are centralized, fostering collaboration among team members and ensuring everyone has access to the same information.

Can I use Agenta for both development and production environments?

Absolutely! Agenta is built to support the entire LLM lifecycle, from experimentation during development to robust observability and monitoring in production, ensuring reliable AI application performance.

Fallom FAQ

How quickly can I set up Fallom?

You can set up Fallom in under five minutes by integrating a single OpenTelemetry-native SDK into your application, enabling you to start tracing immediately.

Is Fallom compatible with all AI models?

Yes, Fallom is designed to work with every AI provider and model, thanks to its OpenTelemetry-native architecture that ensures compatibility and eliminates vendor lock-in.

How does Fallom support compliance?

Fallom provides comprehensive audit trails, input/output logging, model versioning, and consent tracking, ensuring that your AI operations meet various regulatory requirements.

Can I monitor multiple users or sessions with Fallom?

Absolutely! Fallom allows you to group traces by session, user, or customer, giving you complete context and visibility into your AI operations.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform specifically designed to accelerate AI app development. It addresses the inefficiencies and unpredictability common in the LLM lifecycle, providing a centralized hub for experimentation, evaluation, and deployment. Teams often seek alternatives to Agenta due to various factors such as pricing, feature sets, or specific platform integration needs, as well as a desire for enhanced collaboration and productivity. When choosing an alternative, users should consider the platform's ability to streamline workflows, support for cross-functional collaboration, and the flexibility to integrate with existing tools. It's also essential to evaluate the level of automation provided for testing and performance validation, as these factors can significantly impact time-to-production and overall application reliability.

Fallom Alternatives

Fallom is an AI-native observability platform designed for teams focused on building and scaling production-level large language model (LLM) and AI agent applications. By offering lightning-fast tracking of LLM calls and agent interactions, Fallom enables users to gain instant visibility into the complexities of their AI stacks. As demand for advanced observability tools grows, users often seek alternatives due to factors such as pricing, specific feature sets, or compatibility with their existing platforms and workflows. When selecting an alternative, it’s essential to consider the level of real-time visibility, compliance capabilities, and overall integration ease that will best serve your operational needs.