Agent to Agent Testing Platform vs ResponseHub

Side-by-side comparison to help you choose the right product.

Agent to Agent Testing Platform

Validate AI agent performance across chat, voice, and phone interactions to ensure safety, compliance, and reliability.

Last updated: February 27, 2026

ResponseHub

Automate security questionnaires with AI to close deals faster and with total confidence.

Last updated: February 28, 2026

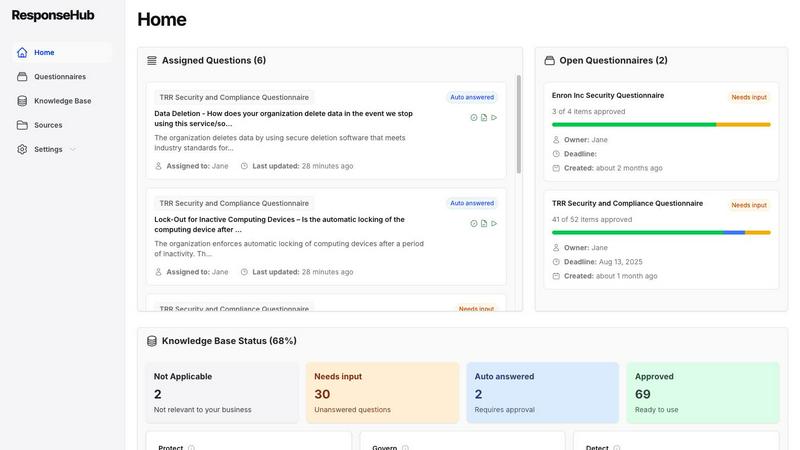

Visual Comparison

Agent to Agent Testing Platform

ResponseHub

Feature Comparison

Agent to Agent Testing Platform

Automated Scenario Generation

The platform automatically generates a diverse range of test cases that simulate various interactions AI agents might encounter, including chat, voice, and hybrid scenarios. This feature ensures comprehensive coverage and robust assessment of AI performance.

True Multi-Modal Understanding

Users can define detailed requirements or upload product requirement documents (PRDs) that include diverse inputs such as images, audio, and video. These inputs help gauge the expected outputs of AI agents in a way that mirrors real-world interactions.

Diverse Persona Testing

The platform leverages multiple personas to simulate different end-user behaviors and needs, ensuring that AI agents perform effectively for a wide range of user types. This includes testing with personas such as International Caller and Digital Novice to assess adaptability.
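As a rough illustration of persona-based coverage, the sketch below crosses personas with scenarios and channels to enumerate test cases. It is not the platform's API: the persona names come from the description above, while the scenario names and the `build_test_matrix` helper are hypothetical.

```python
from itertools import product

# Persona and channel names come from the text above; scenarios are hypothetical.
PERSONAS = ["International Caller", "Digital Novice"]
SCENARIOS = ["reset password", "dispute a charge"]
CHANNELS = ["chat", "voice", "phone"]


def build_test_matrix(personas, scenarios, channels):
    """Return one test case per persona/scenario/channel combination."""
    return [
        {"persona": p, "scenario": s, "channel": c}
        for p, s, c in product(personas, scenarios, channels)
    ]


matrix = build_test_matrix(PERSONAS, SCENARIOS, CHANNELS)
print(len(matrix))  # 2 personas x 2 scenarios x 3 channels = 12
```

Enumerating the full cross product makes coverage gaps visible: any persona that never appears on a given channel is simply missing from the matrix.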

Autonomous Testing at Scale

This feature analyzes agent performance from the perspective of synthetic end-users and evaluates key metrics such as effectiveness, accuracy, empathy, and professionalism. It checks that intent, tone, and reasoning stay consistent across all interactions.

ResponseHub

AI-Powered Questionnaire Parsing

Forget manual data entry. ResponseHub's advanced AI engine effortlessly processes any security questionnaire spreadsheet, no matter how complex. It intelligently identifies and extracts all questions across multiple sheets, handling cover pages and ambiguous column headers with ease, so you can start answering in seconds, not hours.

Automated, Fully-Cited Answer Generation

This is the heart of the platform. ResponseHub's AI doesn't just guess; it analyzes your uploaded source documents (policies, SOPs, product info) and automatically drafts precise answers. Every response is meticulously cited, linking directly to the exact policy, section, page, and sentence, providing undeniable confidence and audit-ready traceability.
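One way to picture a fully cited answer is as a record that carries its own source references. The sketch below is an assumption for illustration only, not ResponseHub's data model: the `Citation` and `DraftAnswer` classes, their fields, and the sample policy text are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Citation:
    """Hypothetical pointer to the exact source of a claim."""
    document: str
    section: str
    page: int
    sentence: str


@dataclass
class DraftAnswer:
    """Hypothetical drafted answer with the citations that support it."""
    question: str
    text: str
    citations: list[Citation] = field(default_factory=list)

    def is_traceable(self) -> bool:
        # Traceable only if there is at least one citation and every
        # citation quotes a non-empty source sentence.
        return bool(self.citations) and all(
            c.sentence.strip() for c in self.citations
        )


answer = DraftAnswer(
    question="Is customer data encrypted at rest?",
    text="Yes, customer data is encrypted at rest.",
    citations=[
        Citation(
            document="Encryption Policy",  # hypothetical document name
            section="3.1",
            page=4,
            sentence="All customer data is encrypted at rest.",
        )
    ],
)
print(answer.is_traceable())  # True
```

A reviewer could reject any draft where `is_traceable()` is false, so that uncited answers never reach the final questionnaire.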

Self-Learning Knowledge Base

Your compliance intelligence grows smarter with every use. The platform continuously builds and maintains an automated knowledge base, learning from every completed questionnaire. It suggests new entries and updates existing ones, ensuring your repository is always current and future responses are even faster.

Collaborative Workflow & Delegation

Move faster as a team. Easily assign specific questions to subject matter experts across security, engineering, or legal for their input. Delegate final review and approval, with every change tracked and logged in a clear audit trail. This streamlines collaboration and distributes the workload efficiently.

Use Cases

Agent to Agent Testing Platform

Quality Assurance for AI Deployments

Enterprises can utilize this platform to conduct thorough quality assurance for AI deployments, ensuring that agents meet required standards for bias, toxicity, and overall performance before going live.

Continuous Improvement of AI Agents

The platform supports ongoing testing and evaluation of AI agents, enabling organizations to identify and fix issues as they emerge so that the user experience keeps improving.

Training and Fine-Tuning AI Models

By simulating various interaction scenarios, developers can gather insights necessary for training and fine-tuning AI models, leading to better performance and user satisfaction.

Risk Assessment for AI Interactions

Organizations can perform regression testing with risk scoring to identify potential problem areas within their AI agents, allowing them to prioritize critical issues and enhance overall operational efficiency.
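Regression testing with risk scoring can be sketched as comparing failure rates between two test runs and ranking what got worse. The scoring formula (severity times failure rate), the threshold, and the sample numbers below are assumptions for illustration, not the platform's actual method.

```python
def risk_score(severity: int, failure_rate: float) -> float:
    """Hypothetical score: severity (1-5) weighted by how often the check fails."""
    return severity * failure_rate


def find_regressions(baseline, current, threshold=0.5):
    """Return (name, score) for checks whose failure rate rose, highest risk first.

    Both arguments map a check name to a (severity, failure_rate) tuple.
    """
    flagged = []
    for name, (severity, rate) in current.items():
        # A check missing from the baseline is treated as never having failed.
        old_rate = baseline.get(name, (severity, 0.0))[1]
        score = risk_score(severity, rate)
        if rate > old_rate and score >= threshold:
            flagged.append((name, score))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Made-up sample data: check name -> (severity, failure rate).
baseline = {"toxicity": (5, 0.01), "hallucination": (4, 0.10), "tone": (2, 0.20)}
current = {"toxicity": (5, 0.01), "hallucination": (4, 0.25), "tone": (2, 0.30)}

for name, score in find_regressions(baseline, current):
    print(f"{name}: {score:.2f}")
```

Sorting by score puts the highest-risk regressions first, which matches the goal of prioritizing critical issues before lower-impact ones.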

ResponseHub

Accelerating Enterprise Sales Cycles

Sales teams can instantly unblock deals stalled by security reviews. Instead of waiting weeks for engineering to manually complete a questionnaire, they get a fully-cited, accurate response in hours. This dramatically speeds up procurement, builds buyer trust, and helps close revenue faster.

Empowering Security & Compliance Teams

Free your security experts from repetitive, low-value manual work. ResponseHub automates the heavy lifting of questionnaire responses, allowing the team to focus on strategic initiatives like threat modeling and policy development while maintaining perfect, consistent audit trails for all external assessments.

Enabling Scalable Startup Growth

For fast-growing startups, manual security reviews are a crippling bottleneck. ResponseHub provides an enterprise-grade compliance response capability from day one, enabling small teams to confidently handle inbound questionnaires from large clients without diverting critical engineering resources from product development.

Streamlining Vendor Risk Management (VRM)

When your company is the one sending out questionnaires, ResponseHub ensures you receive consistent, well-structured, and properly cited responses from your vendors. This standardizes the intake process, making vendor risk analysis faster, more reliable, and less frustrating for all parties involved.

Overview

About Agent to Agent Testing Platform

The Agent to Agent Testing Platform is a revolutionary AI-native quality assurance framework designed specifically for validating the performance of AI agents in real-world scenarios. As AI systems become increasingly autonomous, traditional quality assurance methods are no longer adequate. This platform addresses that gap by offering comprehensive testing that goes beyond simple prompt checks. It evaluates multi-turn conversations across various modalities, including chat, voice, and phone interactions. This ensures that enterprises can assess the performance of their AI agents before deploying them in a production environment. Key metrics such as bias, toxicity, and hallucination are meticulously examined, providing enterprises with the confidence that their AI agents are safe and effective for end-users.

About ResponseHub

ResponseHub is the AI-powered security questionnaire automation platform engineered to obliterate the manual, time-sucking process of vendor security assessments. Built for modern security, sales, and engineering teams, it replaces chaotic spreadsheets with intelligent automation, slashing completion times from days to mere hours. The platform's core genius lies in its ability to ingest complex Excel questionnaires and automatically generate accurate, fully-cited answers by cross-referencing your uploaded policy documents, SOPs, and organizational knowledge. Every single answer comes with 100% traceability, directly linked to the exact sentence, page, and section of your source documentation, eliminating high-stakes guesswork. Continuously learning from every completed assessment, ResponseHub builds and maintains a dynamic, automated knowledge base, making each subsequent questionnaire faster and easier. Designed for lightning speed, its completely self-serve model empowers teams to reclaim critical time, accelerate deal cycles, and move forward with absolute confidence in their security posture.

Frequently Asked Questions

Agent to Agent Testing Platform FAQ

What types of AI agents can be tested with this platform?

The Agent to Agent Testing Platform is designed to test various AI agents, including chatbots, voice assistants, and phone caller agents, across multiple scenarios and modalities.

How does the platform ensure comprehensive testing?

Using automated scenario generation, the platform creates diverse test cases that cover a wide range of interactions, helping surface edge cases and long-tail failures that manual testing tends to miss.

Can custom testing scenarios be created?

Yes, users have the option to create custom testing scenarios tailored to their specific requirements, in addition to accessing a library of hundreds of predefined scenarios.

What metrics can be evaluated during testing?

The platform evaluates critical metrics such as bias, toxicity, hallucination, effectiveness, accuracy, empathy, professionalism, and more, providing a holistic view of AI agent performance.

ResponseHub FAQ

How does ResponseHub ensure answer accuracy?

Accuracy is backed by direct citation. Our AI does not generate answers from a generic database; it scans your specific, uploaded source documents (policies, SOPs) to find the correct information. Every answer is linked to the exact sentence and page it came from, so you can verify each one against its source.

What file formats do you support for questionnaires?

ResponseHub is built to handle the reality of security assessments. We specialize in parsing complex Microsoft Excel (.xlsx, .xls) files, which are the industry standard for questionnaires. We expertly manage multi-sheet workbooks, cover sheets, and various formatting challenges automatically.

Do I need pre-written policies to get started?

Not at all. If you have existing policies in PDF or other formats, you can upload them immediately. If you're starting from scratch, ResponseHub includes a free policy generator to help you create essential security documents in minutes, providing a foundation for the AI to work from.

Is the platform truly self-serve?

Yes. You can sign up, start a free trial, and begin automating questionnaires in under five minutes without talking to sales. The platform is designed for you to onboard yourself, upload documents, and experience the value immediately. Premium onboarding support is available for those who want hands-on assistance.

Alternatives

Agent to Agent Testing Platform Alternatives

Agent to Agent Testing Platform is a pioneering AI-native quality assurance framework designed to validate agent behavior across communication channels such as chat, voice, and phone. As organizations increasingly adopt autonomous AI systems, they often find traditional QA models inadequate for the complexity of these dynamic interactions. Even so, users may look for alternatives that offer better pricing, additional features, or closer compatibility with their specific platform. When considering alternatives, evaluate the comprehensiveness of testing capabilities, the ability to simulate real-world interactions, and the robustness of compliance and security features. This helps ensure that the selected platform meets current requirements and can scale with future needs.

ResponseHub Alternatives

ResponseHub is an AI-powered platform that automates security questionnaire responses, falling into the category of AI assistants for security and sales enablement. Users often explore alternatives to find a solution that better aligns with their specific budget, desired feature set, or integration needs with existing tech stacks. When evaluating other options, key factors include the AI's accuracy and citation capabilities, the ease of building and maintaining a knowledge base, and the platform's ability to handle complex spreadsheet formats without manual setup. The goal is to find a tool that delivers both speed and unwavering confidence in every security answer.